Your Company Just Cut Its UX Team. Now What?

21% of companies laid off UX researchers in 2025. Here's how product teams can still catch critical UX issues without dedicated researchers.

WebSonic Team

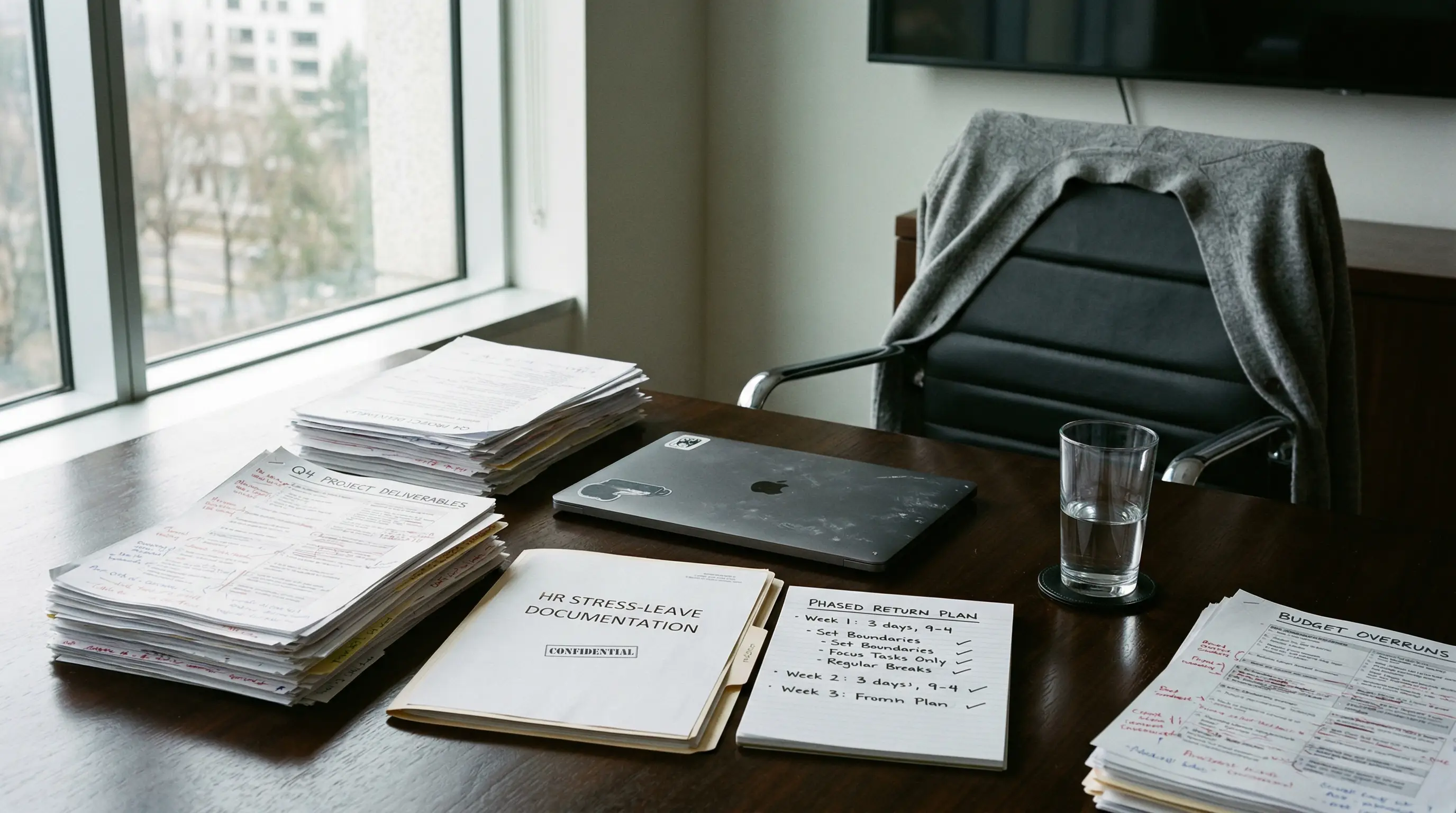

The email arrives on a Tuesday morning. "We're restructuring the UX Research team." Translation: your dedicated researchers are gone, and now the work falls to you.

If Your UX Team Was Cut, Start Here

If your UX team just got cut, don't try to replace full-scale research overnight. Start by automating the mechanical checks that catch obvious UX friction, use session evidence to prioritize what is actually hurting conversion, and reserve human time for the judgment calls AI still can't make.

| In the first 7 days after layoffs | Owner | Output |
|---|---|---|
| Audit homepage, signup, checkout, and mobile flows for obvious breakage | Product or design lead | One ranked list of friction by severity |
| Turn on repeatable automated website testing for the top user journeys | Engineering or QA owner | Screenshot-backed issues instead of ad hoc guessing |
| Review session recordings for the biggest drop-off path | PM or growth owner | 3-5 evidence-backed patterns worth fixing first |
| Keep one human-led usability review on the highest-stakes flow | Product + design | Judgment on trust, clarity, and business risk |

The fastest recovery path is not rebuilding the old research org chart. It is restoring coverage first, then judgment.

You're not alone. 21% of companies laid off UX researchers in 2025, consistent with 2024's numbers. Another 14% now report having zero dedicated researchers — up from just 6% a few years ago. Research Ops specialists are even scarcer: 62% of organizations have none, compared to 50% in 2023.

Yet here's the paradox: demand for user insights hasn't dropped. If anything, it's increased: 55% of product professionals reported that demand for user research grew over the past year. Companies still want to build products users love. They just don't want to pay for the people who traditionally did that work.

So what happens now? Research doesn't stop — it shifts. The question is whether it shifts strategically or chaotically.

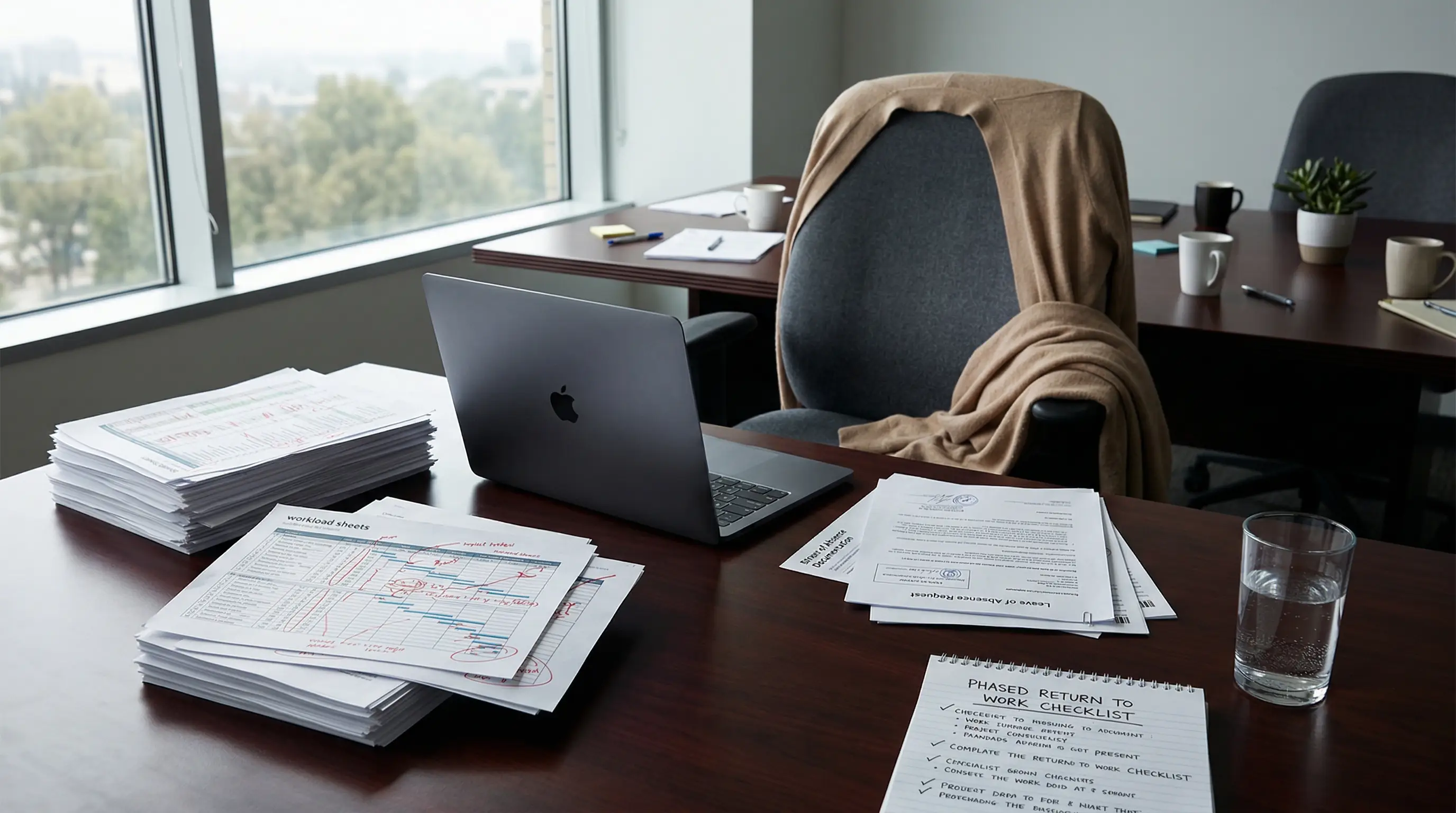

The New Reality: Research by Non-Researchers

The field has a term for this: PWDRs, or "People Who Do Research." For every dedicated researcher still employed, there are now 1 to 5 PWDRs conducting research on the side of their primary role.

These PWDRs are typically:

- Product managers (42% of teams)

- UX designers (70% of teams)

- Developers pulled into usability sessions

- Marketers running surveys

The problem? Most never trained for this. As one designer told researchers: "I feel like my design superiors expect me to 'just know' how to do UX research, and to make it an integral part of every project."

Expectations have increased. Support has not.

Why This Matters for Your Conversion Rate

Here's what happens when UX issues go undetected:

- Only 1% of users say e-commerce websites meet their expectations on every visit

- 17% of users abandon orders solely due to difficult checkout processes

- 40% of users leave if a site takes more than 3 seconds to load

- 88% of online consumers are less likely to return after a bad experience

The cost isn't theoretical. Forrester Research found that well-executed UX design can raise conversion rates by up to 400%. A widely cited estimate puts the return at $100 for every dollar invested in UX, a 9,900% ROI.

Without researchers catching these issues early, they slip into production. And fixing a problem in production can cost up to 100x more than catching it in design.

The Top Challenges You Now Face

According to the 2025 State of User Research Report, PWDRs report three critical challenges:

1. Time and Bandwidth (63% of teams)

You already have a full-time job. Now you're expected to add research to it. For scale, dedicated researchers in the same survey reported a median output of 2 mixed methods studies, 3 qualitative studies, and 1 quantitative study in just six months. You're being asked to cover some share of that on top of your regular deliverables.

The result? Research gets rushed or skipped. Quick decisions replace informed ones.

2. Recruiting the Right Participants (48% of teams)

Finding people who actually represent your users is harder than it looks. You need the right demographics, the right behaviors, the right contexts. Researchers who primarily recruit external users report pain around cost and participant quality. Those using internal databases struggle with timelines and scheduling.

Without a research ops function or dedicated recruiting tools, this becomes a massive time sink.

3. Translating Results to Business Outcomes (42% of teams)

Raw usability findings don't automatically become business cases. You need to connect "users struggled with the checkout button" to "we lost $50,000 in revenue this quarter." That's a skill — one that dedicated researchers spend years developing.
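
One way to make that connection concrete is a back-of-the-envelope estimate. The sketch below is an illustration, not a standard formula: the function name, the inputs, and the sample numbers are all hypothetical, and it assumes the whole drop in completion rate comes from the friction you observed.

```python
def estimated_lost_revenue(sessions, baseline_completion, observed_completion, avg_order_value):
    """Rough revenue gap between a baseline completion rate and the observed one.

    All inputs are assumptions you supply: sessions entering the flow, the
    completion rate before the problem appeared, the rate now, and the
    average order value.
    """
    for rate in (baseline_completion, observed_completion):
        if not 0 <= rate <= 1:
            raise ValueError("completion rates must be between 0 and 1")
    # Never report a negative loss if the observed rate is actually higher.
    lost_orders = sessions * max(baseline_completion - observed_completion, 0.0)
    return round(lost_orders * avg_order_value, 2)


# Hypothetical quarter: 20,000 checkout sessions, completion fell from 50% to 45%,
# and the average order is worth $50.
print(estimated_lost_revenue(20_000, 0.50, 0.45, 50.0))
```

The result is only as good as the baseline you pick, so state the assumption next to the number when you present it.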

Yet here's the pressure: 83% of researchers now rely on internal praise or qualitative signals to track impact. Only 21% of those who track impact are satisfied with their methods. Everyone feels the pressure to prove value.

What You Can Do About It

You can't become a UX researcher overnight. But you can implement systems that catch the most expensive problems without requiring research expertise.

Automate the Mechanical Work

The biggest time sinks in research are mechanical:

- Setting up tests

- Recruiting participants

- Recording sessions

- Transcribing conversations

- Tagging and categorizing issues

These don't require research judgment. They require logistics. And logistics can be automated.

Modern tools can:

- Crawl your site and identify every interactive element

- Generate test scripts based on common UX patterns

- Recruit participants from your actual user base

- Record and transcribe sessions automatically

- Surface patterns across multiple sessions

This doesn't replace research expertise. It removes the barriers that prevent non-researchers from doing research at all.
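
To show how mechanical the first item on that list is, here is a minimal sketch that lists interactive elements in a single HTML page using only Python's standard library. It does not reflect how any particular tool works. The tag set, the `onclick` and `role="button"` heuristics, and the function name are assumptions made for the example, and a real crawler would also need to render JavaScript and follow links.

```python
from html.parser import HTMLParser

# Tags treated as interactive in this sketch (an assumption, not an exhaustive list).
INTERACTIVE_TAGS = {"a", "button", "input", "select", "textarea"}


class InteractiveElementFinder(HTMLParser):
    def __init__(self):
        super().__init__()
        self.found = []  # list of (tag, identifying label) tuples

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        is_interactive = (
            tag in INTERACTIVE_TAGS
            or "onclick" in attr_map
            or attr_map.get("role") == "button"
        )
        if is_interactive:
            # Use the first identifying attribute available, else an empty label.
            label = attr_map.get("id") or attr_map.get("name") or attr_map.get("href") or ""
            self.found.append((tag, label))


def find_interactive_elements(html):
    finder = InteractiveElementFinder()
    finder.feed(html)
    return finder.found
```

Feeding it the markup of a signup page gives you a checklist of elements that each need at least one test.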

Focus on High-Impact, Low-Effort Tests

Not all UX research is equal. Some methods catch expensive problems with minimal effort:

Form analytics: If users repeatedly fail at specific form fields, you have a UX problem. This requires no moderated sessions — just data.
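
A minimal sketch of that calculation, assuming you can export validation events as `(field_name, succeeded)` pairs. The event shape and the function name are hypothetical; your analytics tool will have its own export format.

```python
from collections import Counter


def field_failure_rates(events):
    """Return (field, failure_rate) pairs, worst field first.

    `events` is an iterable of (field_name, succeeded) tuples, one per
    validation attempt on that field.
    """
    attempts, failures = Counter(), Counter()
    for field, succeeded in events:
        attempts[field] += 1
        if not succeeded:
            failures[field] += 1
    rates = {field: failures[field] / attempts[field] for field in attempts}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Hypothetical export from a signup form.
events = [
    ("email", True), ("phone", False), ("phone", False), ("phone", True),
    ("email", True), ("zip", False), ("zip", True),
]
for field, rate in field_failure_rates(events):
    print(f"{field}: {rate:.0%} of attempts failed")
```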

Session recordings: Watch where users hesitate, rage-click, or abandon. Patterns become obvious quickly.
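
Rage clicks in particular can be flagged mechanically before anyone watches a recording. The sketch below is one simple heuristic, not an industry definition: it treats three or more clicks within one second and within 30 pixels of the first click as a burst. The thresholds, the `Click` type, and the function name are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Click:
    timestamp: float  # seconds since session start
    x: float
    y: float


def find_rage_clicks(clicks, min_clicks=3, window_seconds=1.0, tolerance_px=30.0):
    """Return bursts of clicks that stay close to the burst's first click in time and position."""
    ordered = sorted(clicks, key=lambda c: c.timestamp)
    bursts = []
    i = 0
    while i < len(ordered):
        first = ordered[i]
        j = i + 1
        # Extend the burst while clicks stay inside the time window and a
        # square of +/- tolerance_px around the first click.
        while (
            j < len(ordered)
            and ordered[j].timestamp - first.timestamp <= window_seconds
            and abs(ordered[j].x - first.x) <= tolerance_px
            and abs(ordered[j].y - first.y) <= tolerance_px
        ):
            j += 1
        if j - i >= min_clicks:
            bursts.append(ordered[i:j])
            i = j  # skip past the burst so it is reported once
        else:
            i += 1
    return bursts
```

Sessions with at least one burst are good candidates for the weekly recording review, since they point at elements that look clickable but do not respond as expected.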

First-click testing: Can users find the right starting point for common tasks? Users who get the first click right are far more likely to complete the task, so this one metric is a cheap early predictor of task success.

Accessibility checks: Automated tools catch 30-50% of accessibility issues in seconds. The remaining issues still need manual review, but the automated catches reduce legal exposure and expand your market.
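
As a rough illustration of what "mechanical" means here, the sketch below flags two common issues using only Python's standard library: images without an `alt` attribute and form controls with no associated label. It is a toy under stated assumptions, not a replacement for a real accessibility scanner. The class and function names are hypothetical, and it does not handle inputs wrapped inside a `<label>` element.

```python
from html.parser import HTMLParser


class BasicA11yChecker(HTMLParser):
    """Collects images without alt text and form controls that may lack a label."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self.labelled_ids = set()  # ids referenced by <label for="...">
        self.controls = []         # (id, name, has_aria_label)

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "img" and "alt" not in attr_map:
            self.issues.append(f"img missing alt: {attr_map.get('src', '?')}")
        elif tag == "label" and attr_map.get("for"):
            self.labelled_ids.add(attr_map["for"])
        elif tag in {"input", "select", "textarea"}:
            # Hidden fields and buttons do not need a visible label.
            if attr_map.get("type") in {"hidden", "submit", "button"}:
                return
            has_aria = bool(attr_map.get("aria-label") or attr_map.get("aria-labelledby"))
            self.controls.append((attr_map.get("id"), attr_map.get("name", "?"), has_aria))


def check_accessibility(html):
    checker = BasicA11yChecker()
    checker.feed(html)
    # Labels can appear after the control, so resolve them once parsing is done.
    for control_id, name, has_aria in checker.controls:
        if not has_aria and control_id not in checker.labelled_ids:
            checker.issues.append(f"form control without label: {name}")
    return checker.issues
```

Checks like these are the part automation handles well. Whether the alt text is actually useful still takes a person.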

These methods don't replace deep qualitative research. But they catch the obvious, expensive problems that currently slip through.

Build a Feedback Loop, Not a Research Department

You don't need a research department. You need a system that:

- Continuously surfaces UX friction

- Prioritizes issues by business impact

- Validates fixes before they ship

- Measures whether changes actually helped

This system can be lightweight. The key is consistency — regular pulses of insight rather than occasional deep dives.
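
The prioritization step can start as a simple score. The sketch below ranks issues by reach, severity, and whether they sit on a revenue path. The weighting, the 1-4 severity scale, and every name in it are assumptions chosen for illustration, so adjust them to whatever your team already tracks.

```python
from dataclasses import dataclass


@dataclass
class FrictionIssue:
    name: str
    affected_sessions_pct: float  # share of sessions that hit the issue, 0-1
    severity: int                 # 1 = cosmetic ... 4 = blocks the task
    on_revenue_path: bool         # True if the issue sits on signup or checkout


def priority_score(issue):
    # Double the score for issues on a revenue path (an arbitrary weight).
    weight = 2.0 if issue.on_revenue_path else 1.0
    return issue.affected_sessions_pct * issue.severity * weight


def rank_issues(issues):
    return sorted(issues, key=priority_score, reverse=True)


# Hypothetical backlog.
backlog = [
    FrictionIssue("Footer link typo", 0.40, 1, False),
    FrictionIssue("Checkout button hidden on mobile", 0.15, 4, True),
    FrictionIssue("Slow search results", 0.25, 2, False),
]
for issue in rank_issues(backlog):
    print(f"{priority_score(issue):.2f}  {issue.name}")
```

A shared, explicit score like this keeps the weekly review focused on evidence rather than on whoever argues loudest.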

| If your team can only protect one cadence | Run this | Why it matters after layoffs |
|---|---|---|
| Every release | Automated website testing on homepage, signup, checkout, and mobile | Restores baseline coverage so obvious regressions stop shipping silently |
| Every week | 30-minute review of session recordings from the top drop-off flow | Turns behavioral evidence into a short list of real friction instead of opinion-driven debates |
| Every month | One human-led usability review on the highest-stakes journey | Preserves the interpretation layer AI still cannot replace |
| Every quarter | Pattern review across repeated failures, support tickets, and conversion dips | Keeps the team focused on systemic UX debt instead of one-off bugs |

This is the minimum viable research loop for teams that lost dedicated UX headcount: coverage every release, evidence every week, human judgment every month.

Companies that integrate research into business decisions see 2.7x better outcomes than those that conduct research but rarely use it. Frequency and integration matter more than sophistication.

The AI Question

You might be wondering: can't AI just do this now?

80% of researchers now use AI tools, up 24 points from 2024. But sentiment is mixed — 41% view it negatively. Top concerns: hallucinations (91% worried) and erosion of critical thinking (63% worried).

AI excels at pattern recognition and automation. It struggles with:

- Understanding context

- Interpreting emotional reactions

- Prioritizing which issues actually matter

- Connecting findings to business strategy

The most effective approach isn't AI replacing human judgment. It's AI handling the mechanical work so humans can focus on the judgment. If your team needs the bigger operating model behind that split, read "UX Research in 2026: Why AI Is Making Human Judgment More Valuable, Not Less" for the clearest breakdown of what AI should own, what still needs people, and why synthetic users remain a narrowing tool rather than a substitute for reality.

The Hard Truth

The layoffs aren't necessarily wrong from a business perspective. Many companies over-hired researchers during COVID and are now correcting. 40% of organizations in one study have only been doing UX research for 4-6 years — it's a relatively young function.

But the need for user insight isn't going away. If anything, it's more critical as markets get more competitive and users get more demanding.

The companies that thrive won't be the ones who eliminated research. They'll be the ones who made research more efficient — who figured out how to get 80% of the value with 20% of the effort.

That's the challenge now facing every product team. The research function hasn't disappeared. It's been distributed to you.

The question is whether you'll do it well enough to matter.

UX Research Layoffs FAQ

Can product managers handle UX research after layoffs?

Yes, but only if the team narrows the job. Product managers can run lightweight usability checks, review session evidence, and prioritize friction in key flows. They usually struggle when they are expected to recruit participants, synthesize patterns across many sessions, and translate weak signals into a business case without support.

What research work should teams automate first?

Start with repetitive coverage work: automated website testing, accessibility checks, form monitoring, and repeatable pre-launch reviews. These are the fastest ways to catch expensive UX issues after headcount cuts because they reduce the manual auditing burden immediately.

What still needs human judgment after UX layoffs?

Teams still need humans to interpret nuance, decide which UX issues matter most, and connect findings to business outcomes. That's the gap between a surface-level website feedback tool report and an actual product decision.

How do small teams keep usability testing going without a research department?

Small teams do better with a lightweight loop than a fake replacement department: run website usability testing, review the top journeys weekly, validate fixes before launch, and keep one shared list of repeated friction patterns. Consistency matters more than ceremony.

Sources

- User Interviews — State of User Research Report

- Maze — Future of User Research Trends

- Baymard Institute — UX Statistics

- User Interviews — State of User Research 2024 Report

- Userlytics — The State of UX 2025

If you need a practical framework for catching issues between research cycles, start with automated website testing. If you're deciding when to use manual studies versus automation, read website usability testing: manual vs AI-powered.

WebSonic is an AI UX testing agent that helps teams catch critical usability issues without dedicated researchers. It runs automated tests, analyzes user flows, and surfaces the problems actually hurting your conversion rate.
