Automated Website Testing Before A/B Testing: How to Find the $50K Button

Automated website testing helps teams find UX leaks before they waste time on A/B tests. Learn when to run website usability testing first and what to fix.

Websonic Team

Websonic

Automated website testing is the faster move when you suspect something obvious is suppressing conversion. Before you spend weeks setting up experiments, you need to know whether your CTA is hidden, your form is too demanding, or your trust signals disappear at the exact moment a user needs reassurance.

Every startup has the story. The button that was slightly too small. The form that asked for too much information. The CTA color that blended into the background. The fix that took 30 minutes and increased conversion by 40%.

The problem is not that these issues are hard to fix. The problem is that they are hard for the team that built the page to notice. You have looked at the interface so long that the friction feels normal.

Here is the useful distinction: A/B testing helps you choose between working options. Website usability testing helps you find the broken path first. If the funnel is leaking, experimentation can tell you which leak is slightly better. It cannot tell you that the bucket needs patching.

Quick verdict: if your team has obvious CTA, form, mobile, or trust issues, run automated website testing before you run another experiment. Use A/B tests after the flow already works.

When to use automated website testing vs. A/B testing

A/B tests are strongest once the flow already works. Automated website testing is stronger when the problem is still diagnosis.

Use automated website testing when:

- traffic is too low to wait for statistical significance

- launch velocity is higher than QA capacity

- you suspect obvious friction in forms, CTAs, navigation, or mobile states

- you need screenshot evidence a designer or developer can act on quickly

Use A/B testing when:

- the funnel already works and you are comparing two credible variants

- you have enough traffic to reach significance

- you are optimizing copy, hierarchy, or layout after the baseline UX is sound

The A/B testing barrier most teams ignore

That distinction matters because Websonic's focus is not generic CRO advice. It is automated website testing for teams that need to spot broken UX paths quickly. If your biggest constraint is visibility rather than creativity, diagnosis beats experimentation.

A/B testing is powerful. The numbers prove it:

- 77% of firms globally now conduct A/B tests on their websites, according to VWO's roundup of A/B testing adoption data (source)

- Baymard still tracks average cart abandonment at roughly 70%, which is a reminder that many conversion leaks are structural, not cosmetic (source)

- In Baymard's checkout research, 19% of shoppers reported abandoning because they did not want to create an account (source)

But here's what those statistics hide: A/B testing optimizes between working versions. It doesn't find broken versions.

You can't A/B test your way out of a CTA that's buried below the fold on mobile. You can't split-test a checkout process that requires 12 fields before showing pricing. You can't experiment your way to success if your fundamental UX is flawed. If checkout is the part of the funnel leaking hardest, use our breakdown of silent conversion killers in checkout UX to prioritize hidden costs, forced accounts, and mobile payment friction before you run another experiment.

The companies reporting 223% ROI from optimization? They started with UX audits, not A/B tests. They fixed the obvious problems first. Then they optimized. If you want the broader playbook behind those quick wins, start with our guide to automated website testing. And if you want the most common high-impact patterns those audits surface first, use our breakdown of website usability testing with heatmaps.

Real wins from obvious fixes

Let's look at what happens when teams put fresh eyes on overlooked problems and run experiments on a foundation that already works:

Microsoft Bing had a low-priority idea sitting in their backlog: slightly altering how ad headlines displayed. An engineer finally ran it as an A/B test. Result: 12% revenue increase worth over $100 million annually in the U.S. alone, according to Harvard Business Review's write-up of the experiment (source). The work took days. The idea had been sitting there, invisible to prioritization, because nobody had fresh eyes on the problem.

Google famously tested 41 shades of blue for hyperlink colors. Google UK managing director Dan Cobley said the winning shade produced an extra $200 million in annual ad revenue at Google's scale (source). But they could only run that test because their fundamental UX was already solid. You don't test button colors when your button is invisible.

True Botanicals used a mix of redesign work, qualitative studies, and experimentation to push site-wide conversion to 4.9% and generate an estimated $2 million ROI increase in the first year, according to Optimizely's case study (source). The point is not that one badge changed everything. The point is that teams get outsized gains when they fix visible friction before debating micro-variants.

HP built a broader experimentation program that ran 490+ campaigns and drove $21 million in incremental revenue, again in Optimizely's published case study (source). Their own examples span enrollment flows, category pages, and support surfaces — exactly the kind of journeys where weak UX can poison test results before the test even starts.

The pattern is clear: A/B testing is a magnifier. If your foundation is broken, you magnify brokenness. If your foundation is solid, you magnify success.

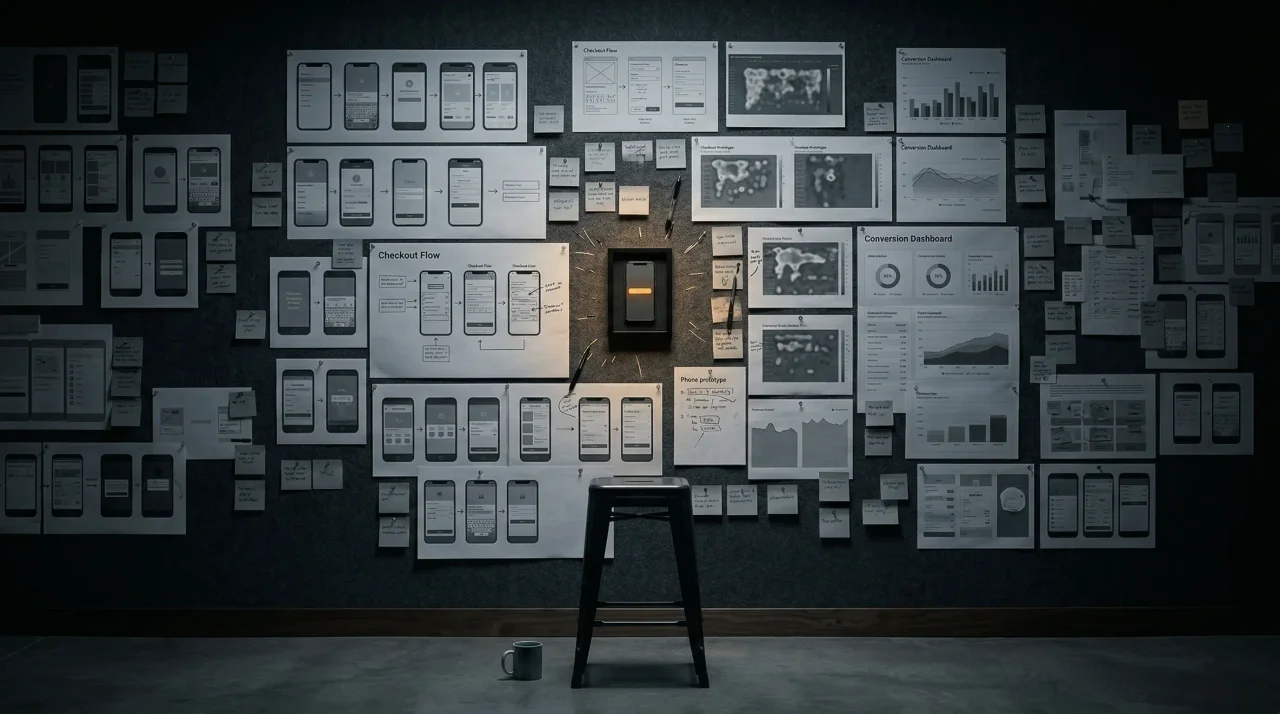

The $50K button archetypes

Across hundreds of websites and thousands of UX audits, the same issues appear repeatedly. These are the "$50K buttons": fixes that cost almost nothing but deliver outsized returns.

1. The invisible CTA

The problem: Primary action buried below the fold on mobile, or styled to look disabled, or competing with five other buttons.

The real cost: Every percentage point of conversion rate matters. If you're doing $500K ARR with a 2% conversion rate, moving to 3% means $250K in additional annual revenue. From a button placement.

The fix: Single primary action per page. Above the fold on all devices. High contrast. Active state styling.
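Two parts of that fix, "above the fold" and "high contrast", are mechanical enough to check in a script. The sketch below is one possible way to do it, not a description of how Websonic works. It assumes you already have the button's position and colors (for example from a browser automation tool), and it borrows the WCAG 2.x contrast-ratio formula as a stand-in for "high contrast". The 4.5:1 default is the WCAG AA threshold for normal-size text; the function names and sample values are hypothetical.

```python
def _linearize(channel: int) -> float:
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    """Relative luminance of an sRGB color, from 0.0 (black) to 1.0 (white)."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    lum_fg, lum_bg = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(lum_fg, lum_bg), min(lum_fg, lum_bg)
    return (lighter + 0.05) / (darker + 0.05)


def is_above_fold(top_px: float, height_px: float, viewport_height_px: float) -> bool:
    """True when the whole button is visible without scrolling."""
    return top_px >= 0 and top_px + height_px <= viewport_height_px


def cta_problems(top_px, height_px, viewport_height_px, fg, bg, min_contrast=4.5):
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    if not is_above_fold(top_px, height_px, viewport_height_px):
        problems.append("below the fold")
    if contrast_ratio(fg, bg) < min_contrast:
        problems.append("low contrast")
    return problems


# Hypothetical mobile measurement: light gray label on a white button,
# positioned 900px down a 667px-tall viewport.
print(cta_problems(900, 48, 667, fg=(170, 170, 170), bg=(255, 255, 255)))
```

Run it once per breakpoint you care about, since a button that passes on desktop can still fail on a short mobile viewport.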

2. The form wall

The problem: Asking for 12 fields of information before delivering value. Phone numbers required for email newsletters. Account creation mandatory for checkout.

The data: Baymard's checkout research found 19% of shoppers abandon when sites force account creation (source). That is the form-wall problem in one number: too much demanded before enough value is clear.

The fix: Progressive disclosure. Ask for email first. Collect additional information only when necessary. Guest checkout for e-commerce. For a deeper breakdown of where forms leak conversions, read our guide to form UX testing.

3. The trust gap

The problem: No security signals near purchase buttons. Missing refund policies. No social proof at decision points.

The data: User-generated content can increase conversion rates by 161% (Yotpo study of 200,000 e-commerce stores). Brooks Running saw an 80% decrease in return rates by adding personalized customer service offers at key decision points.

The fix: Payment badges near CTAs. Clear refund policies. Recent customer activity. Review prominence.

4. The navigation maze

The problem: Users can't find pricing, contact information, or key product details. Hamburger menus hiding critical paths. Information architecture designed for the company org chart, not user mental models.

The fix: Persistent navigation with clear labels. Pricing accessible within two clicks. Information architecture based on user research, not internal structures.

5. The loading limbo

The problem: No feedback on slow actions. Users clicking submit buttons multiple times. Abandoned carts during processing delays.

The fix: Progress indicators. Skeleton screens. Clear loading states. Error messages that explain what happened and what to do next.

A 48-hour fix queue for automated website testing findings

Once an audit returns a list of issues, most teams lose time arguing about what to ship first. Use this simple rule: fix blockers before persuaders, and fix persuaders before experiments.

| Finding from automated website testing | Severity | Effort | Ship first? | Why |
|---|---|---|---|---|
| CTA hidden below the fold on mobile | High | Low | Yes | If the action is not visible, no copy test matters |
| Required account creation before checkout | High | Medium | Yes | Baymard's abandonment data says this is structural friction |
| No trust copy near payment step | Medium | Low | Yes | Easy reassurance can unlock intent already present |
| Five competing buttons on one landing page | Medium | Low | Usually | Clarifies the primary action before you test variants |
| Headline variant A vs. B on a stable page | Low | Medium | Later | Good A/B testing target, but not the first fix |

That ordering is why automated website testing before A/B testing is practical for lean teams. It gives product, design, and engineering a queue they can actually execute in a sprint.
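If your audit output lands in a spreadsheet or JSON export, the same queue can be produced with a small sort. This is a minimal sketch under one assumption: that "blockers before persuaders" can be approximated by sorting on severity first and effort second. The rank tables and the `fix_queue` name are illustrative, and the sample rows are the five findings from the table above, deliberately shuffled.

```python
# Lower rank sorts first: most severe issues, then the cheapest fixes among them.
SEVERITY_RANK = {"High": 0, "Medium": 1, "Low": 2}
EFFORT_RANK = {"Low": 0, "Medium": 1, "High": 2}

# (finding, severity, effort), in arbitrary order as an audit might return them.
findings = [
    ("Headline variant A vs. B on a stable page", "Low", "Medium"),
    ("No trust copy near payment step", "Medium", "Low"),
    ("Required account creation before checkout", "High", "Medium"),
    ("Five competing buttons on one landing page", "Medium", "Low"),
    ("CTA hidden below the fold on mobile", "High", "Low"),
]


def fix_queue(items):
    """Order findings by severity, then by effort. Ties keep their input order."""
    return sorted(items, key=lambda f: (SEVERITY_RANK[f[1]], EFFORT_RANK[f[2]]))


for position, (finding, severity, effort) in enumerate(fix_queue(findings), start=1):
    print(f"{position}. {finding} (severity: {severity}, effort: {effort})")
```

Sorting on two plain ranks keeps the rule easy to argue about in a planning meeting. If your team weighs effort more heavily, swap the key order rather than inventing a composite score.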

Why automated website testing beats A/B testing for early wins

A/B testing requires:

- Engineering resources to implement test variations

- Sufficient traffic for statistical significance (often 10K+ visitors per variation, depending on your baseline rate and the size of the lift you want to detect)

- Weeks or months to reach conclusive results

- A working version to test against

UX audits require:

- A URL

- 5 minutes

- Fresh eyes

This comparison is not a precision model. It is a practical planning view for small teams deciding whether to diagnose first and optimize second.

The math is simple. If you have obvious UX issues—and most websites do—website usability testing finds them immediately. You fix them. You see results. Then, once your foundation is solid, you run A/B tests to optimize further.

A/B testing finds the best shade of blue. Automated website testing finds out you've been using red.
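To see where a traffic requirement like the one in the A/B list comes from, you can estimate it yourself. The sketch below uses the standard sample-size approximation for a two-sided test comparing two proportions. The defaults of 5% significance and 80% power are common conventions and an assumption here, as are the function name and the example rates; a dedicated experimentation platform may use a different method.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variation(baseline_rate: float, target_rate: float,
                           alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH variation to detect the given lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    pooled = (baseline_rate + target_rate) / 2
    spread_null = sqrt(2 * pooled * (1 - pooled))
    spread_alt = sqrt(baseline_rate * (1 - baseline_rate)
                      + target_rate * (1 - target_rate))
    effect = target_rate - baseline_rate
    return ceil((z_alpha * spread_null + z_beta * spread_alt) ** 2 / effect ** 2)


# Example: detecting a move from a 2% to a 2.5% conversion rate.
needed = visitors_per_variation(0.02, 0.025)
print(f"Visitors needed per variation: {needed:,}")
```

With a 2% baseline and a 2.5% target, this approximation lands at roughly 14,000 visitors per variation. A site with 10,000 monthly visitors split across two variations would need close to three months to get there, which is the practical reason low-traffic teams diagnose first.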

Broken funnel vs. optimized funnel

| Symptom | What an A/B test tells you | What automated website testing tells you | Fix owner |
|---|---|---|---|
| CTA under the fold on mobile | Which weak variant loses less | The primary action is literally not visible on first view | Design + frontend |
| Long signup form | Which field order performs slightly better | Users face too much cognitive load before value appears | Product + frontend |
| Missing trust near checkout | Whether a badge placement wins by a few points | Buyers do not get reassurance at the decision moment | Growth + design |
| Confusing navigation | Which label variant gets more clicks | Users cannot find pricing, contact, or proof at all | Product marketing + design |

This is why running automated website testing before A/B testing is such a useful sequence for lean teams. You stop debating minor variants until the obvious path has been repaired.

The AI-powered shortcut

Traditional UX audits required human experts. Expensive human experts. Teams would spend $5,000-$25,000 on professional UX audits, wait weeks for results, then struggle to prioritize the 50+ issues identified.

AI changes the equation. Modern UX testing agents can:

- Explore like a user — navigating your site, clicking buttons, filling forms

- Capture screenshot evidence — visual proof of every issue found

- Score severity — ranking issues by impact, not just presence

- Suggest specific fixes — actionable recommendations, not vague observations

- Deliver results in minutes — not weeks

The output is a prioritized report: high-impact issues that are easy to fix, surfaced with visual evidence you can hand directly to your design or development team.

When to use UX audits vs. A/B testing

Use UX audits first:

- Before product launches

- After redesigns

- When conversion rates drop unexpectedly

- When you haven't done a systematic UX review in 6+ months

- When you're early-stage and don't have A/B testing infrastructure

Use A/B testing after:

- You've fixed obvious UX issues

- You have sufficient traffic for statistical significance

- You're optimizing between multiple working versions

- You need to validate subtle changes (copy, colors, layout variations)

Think of it this way: UX audits find the leaks in your bucket. A/B testing optimizes the bucket's shape. If you're pouring water into a leaky bucket, optimizing the shape is pointless.

The ROI calculation that convinces stakeholders

Here's the business case for UX audits in simple terms:

Cost of UX audit: $0-$249/month for AI-powered tools

Cost of one critical UX issue: 10-40% conversion loss

If you're doing $50K MRR with a 2% conversion rate, a single issue suppressing 20% of conversions means roughly $10K in lost monthly revenue. Fix it in 30 minutes, and you've paid for years of UX auditing.

The math gets ridiculous quickly:

- Monthly visitors: 10,000

- Current conversion: 2% = 200 conversions

- Average order value: $100

- Monthly revenue: $20,000

- Fix UX issue, improve to 2.5%: 250 conversions

- Additional monthly revenue: $5,000

- Annual impact: $60,000

From one fix. That took an hour.

Now imagine finding 5 such issues in a single audit. That's the $50K button—multiple buttons, actually, each worth thousands in found revenue.
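The arithmetic above is easy to rerun with your own numbers before you take it to stakeholders. The following is a minimal sketch that assumes traffic and average order value stay constant while only the conversion rate changes; the function names are illustrative, and the inputs are the example figures from the list above.

```python
def monthly_revenue(visitors: int, conversion_rate: float,
                    average_order_value: float) -> float:
    """Revenue for one month: visitors x conversion rate x order value."""
    return visitors * conversion_rate * average_order_value


def revenue_uplift(visitors: int, old_rate: float, new_rate: float,
                   average_order_value: float) -> tuple[float, float]:
    """Return (extra monthly revenue, extra annual revenue) from a rate change."""
    before = monthly_revenue(visitors, old_rate, average_order_value)
    after = monthly_revenue(visitors, new_rate, average_order_value)
    monthly = after - before
    return round(monthly, 2), round(monthly * 12, 2)


# The example from the text: 10,000 visitors, 2% -> 2.5%, $100 average order.
monthly, annual = revenue_uplift(10_000, 0.02, 0.025, 100)
print(f"Additional monthly revenue: ${monthly:,.0f}")
print(f"Annual impact: ${annual:,.0f}")
```

Swap in your own traffic, rates, and order value. Stating the held-constant assumptions up front tends to make the figure more credible with a finance audience than a bare headline number.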

Composite workflow: From automated website testing to revenue in 48 hours

This example is a composite workflow built from common issues teams surface during website usability testing. It is useful because the pattern repeats constantly even when the brand names change.

A B2B SaaS company was struggling with trial sign-ups. They'd spent months optimizing their onboarding flow, running A/B tests on email subject lines, tweaking their pricing page copy. Results were flat.

They ran a UX audit. Three critical issues surfaced immediately:

- The CTA was below the fold on mobile — 60% of their traffic was mobile, and the primary "Start Free Trial" button required scrolling

- The form asked for company size before email — users hit a dropdown requiring thought before they could even start

- No trust signals near the CTA — no security badges, no "no credit card required" messaging, no customer logos

They fixed all three issues in a single afternoon:

- Moved CTA above fold

- Reversed form field order (email first, company size optional)

- Added security badges and "No credit card required" text

Results after one week:

- Trial sign-ups increased 43%

- Cost per acquisition dropped 31%

- The "failed" A/B tests from previous months suddenly showed positive results—they'd been optimizing a broken experience

The kicker? They'd been about to increase their ad spend to hit growth targets. The UX audit cost $79. The fixes took 4 hours. The results exceeded what they would have gotten from doubling their ad budget.

What automated website testing tools should actually show you

The category matters because not every tool that claims to do website testing is equally useful. If you need a launch-readiness companion to this diagnosis workflow, our pre-launch UX checklist shows what to validate before traffic hits a newly shipped page. A serious automated website testing tool should show you:

- Screenshot evidence tied to the exact page state and breakpoint

- Severity ranking so teams know what to fix first

- Reproduction steps instead of vague commentary

- Mobile and desktop path coverage because hidden friction often appears on only one device class

- Recommended fixes that bridge discovery to execution

That is the commercial difference between a generic analytics dashboard and a tool that actually helps teams ship better UX. If you want a deeper breakdown of the tooling tradeoffs, compare our guides to AI website analyzers, automated website testing, website usability testing: manual vs AI-powered, website feedback tools, and our category split on agentic testing vs. AI-assisted testing. And if you are actively comparing vendors, the new 5-minute buyer test inside AI website analyzer: what it finds that your team misses gives product, design, and engineering a faster way to separate screenshot-backed tools from report-only noise.
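One way to pressure-test a vendor's output is to ask whether each reported issue could fill in a record like the one below. This is a hypothetical schema for illustration, not the export format of Websonic or any other tool; every field name is an assumption chosen to mirror the checklist above.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """One issue from an automated website test (hypothetical schema)."""
    title: str
    severity: str                 # "high", "medium", or "low"
    page_url: str
    device_class: str             # "mobile" or "desktop"
    breakpoint_px: int            # viewport width the screenshot was taken at
    screenshot_path: str = ""     # evidence tied to the exact page state
    reproduction_steps: list[str] = field(default_factory=list)
    recommended_fix: str = ""

    def is_actionable(self) -> bool:
        """True only when evidence, steps, and a fix are all present."""
        return bool(self.screenshot_path
                    and self.reproduction_steps
                    and self.recommended_fix)


# Placeholder values for illustration only.
example = Finding(
    title="Primary CTA below the fold",
    severity="high",
    page_url="https://example.com/pricing",
    device_class="mobile",
    breakpoint_px=375,
    screenshot_path="screens/pricing-375.png",
    reproduction_steps=["Open /pricing at 375px width", "Observe first view"],
    recommended_fix="Move the CTA above the first content block",
)
print(example.is_actionable())
```

A finding that cannot populate the screenshot, steps, and fix fields is commentary rather than something a designer or developer can pick up and ship.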

Where a website feedback tool fits — and where it does not

A website feedback tool is useful when you already know where friction might live and want users to describe it in their own words. It can confirm that pricing felt vague, the CTA felt risky, or the form asked for too much too soon. But a feedback widget will not reliably tell you that the primary button was hidden below the fold on one mobile breakpoint, or that a validation loop made the form feel broken before users had language for what happened.

That is why the stack matters:

- a website feedback tool helps you collect reactions and language from users

- a UX testing tool helps you compare workflows and choose the right layer for diagnosis

- automated website testing helps you spot the visible blockers before they quietly drain traffic

If you are deciding between categories instead of individual products, start with our broader guide to choosing a UX testing tool. If you already have qualitative comments coming in but still cannot see the actual point of failure, pair them with session recording analysis so behavior and feedback reinforce each other.

Automated Website Testing FAQ

What is the difference between automated website testing and A/B testing?

Automated website testing is a diagnosis layer. It looks for broken or weak UX paths — hidden CTAs, overloaded forms, missing trust, mobile friction, and navigation problems. A/B testing is an optimization layer. It compares two credible versions after the underlying path already works.

When should a small team run automated website testing first?

Run automated website testing first when traffic is low, launch velocity is high, or the team suspects obvious friction but does not yet know where it lives. In those cases, waiting weeks for an experiment is slower than finding the issue directly.

What should an automated website testing tool return?

A serious automated website testing tool should return screenshot evidence, reproduction steps, severity ranking, and fix recommendations tied to the exact page state. If the output only gives charts without telling the team what broke and where, it is not useful for execution.

Can automated website testing replace human usability testing?

No. Automated website testing is strongest for repeatable detection and fast triage. Human usability testing is still better when you need to understand motivation, interpretation, and emotional hesitation. The best workflow is to use automated testing to surface obvious blockers, then use human research on the pages and journeys that still need interpretation.

Building a UX-first optimization culture

The companies seeing 223%+ ROI from optimization don't treat UX as a one-time project. They build systematic processes:

Monthly UX audits: Schedule 30 minutes monthly to run fresh audits on critical pages. New issues emerge as you add features, change copy, or update designs.

Pre-launch checklists: Never launch a new page or feature without a quick UX audit. Catch issues before they cost you conversions.

Post-redesign validation: Major redesigns always introduce unexpected issues. Audit immediately after launch to find and fix them quickly.

Competitive monitoring: Audit competitor sites quarterly. Learn from their UX mistakes and wins.

A/B test preparation: Run UX audits before any major A/B testing initiative. Fix the obvious issues first, then test optimizations.

The bottom line

A/B testing is essential for mature optimization programs. But it's not where you start. You start with fresh eyes on your existing experience, finding the $50K buttons hiding in plain sight.

The businesses winning at conversion optimization follow a simple playbook:

1. Audit first: find the obvious issues

2. Fix the foundation: repair what's broken

3. Then optimize: run A/B tests on working versions

Skip step 1, and you're optimizing a broken experience. Invest in step 1, and you often find you don't need as many A/B tests—the wins are obvious once you can see them.

Your $50K button is probably on your site right now. You just need fresh eyes to find it.

If you want a more concrete list of the checkout and mobile friction patterns that quietly suppress revenue, read $260 billion in abandoned carts: the UX failures behind the largest leak in e-commerce. And if you are deciding where AI audits fit relative to human research, our guide to website usability testing: manual vs AI-powered breaks down the tradeoffs.

Try UX Tester free — AI-powered UX audits in 5 minutes. Find your $50K button.
